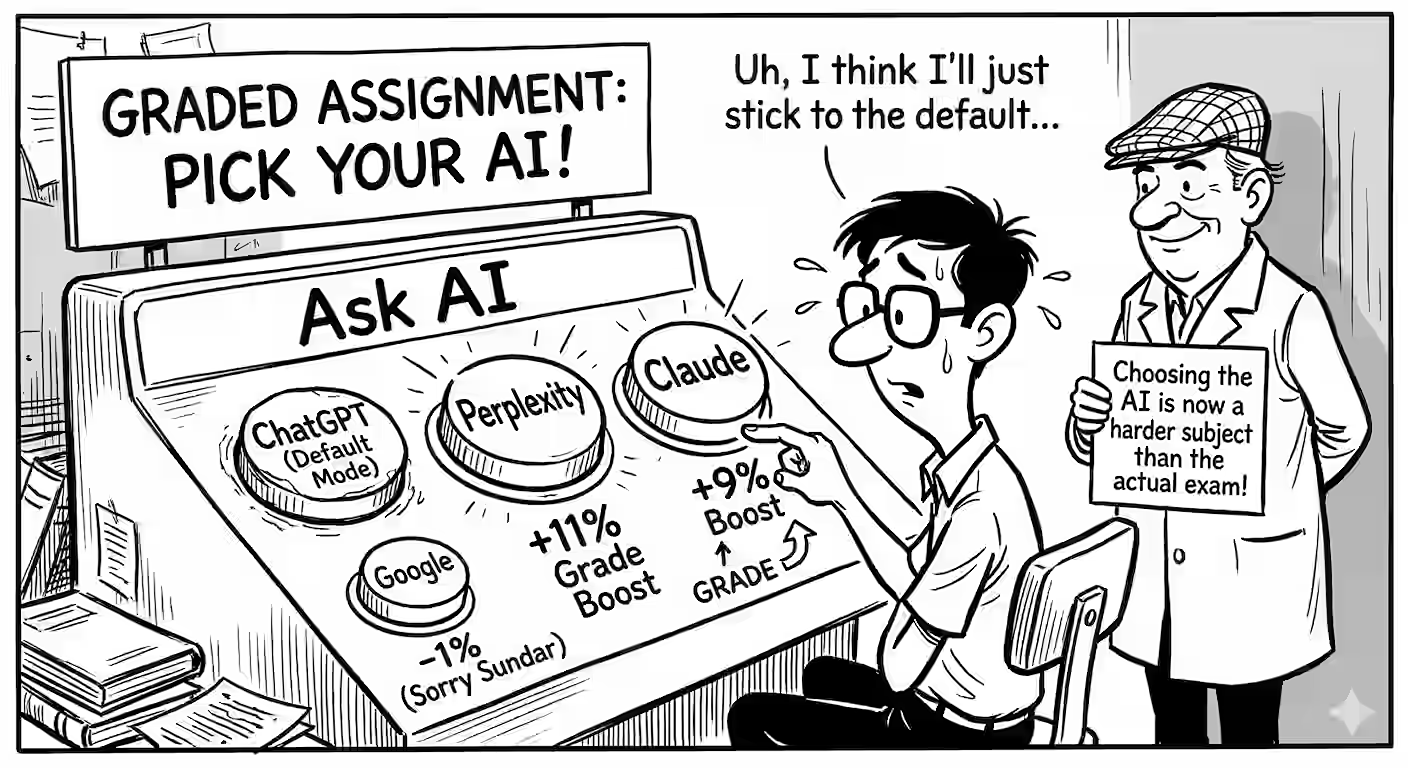

In my graded assignments students can pick an AI and “Ask AI” any question at the click of a button. It defaults to Google AI Mode, but other models are available. I know who uses which model and their scores in each assignment.

I asked Codex to test whether using a specific model helps students score higher.

The short answer? Yes. Model choice matters a lot. Across 333 students, here’s how much more/less students score compared with ChatGPT:

- Perplexity: ~+11 percentage points (99.8% sure it has an impact)

- Claude: +9 points (99.5% sure)

- Clipboard, i.e. just copying the question to the clipboard and pasting it into their choice of AI: +9 points (97.5% sure)

- Google: -1 point (Not sure. Sorry, Sundar.)
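For anyone who wants to try this kind of comparison on their own data, here is a minimal sketch. It is not the analysis Codex actually ran: the scores are invented, the helper names `mean_gap` and `permutation_p_value` are hypothetical, and reading "X% sure" as roughly one minus a p-value is an assumption.

```python
import random


def mean_gap(scores_by_model, model, baseline="ChatGPT"):
    """Mean score of `model` minus mean score of `baseline`, in points."""
    a, b = scores_by_model[model], scores_by_model[baseline]
    return sum(a) / len(a) - sum(b) / len(b)


def permutation_p_value(a, b, n_iter=10_000, seed=0):
    """Two-sided permutation test for a difference in group means."""
    rng = random.Random(seed)
    observed = abs(sum(a) / len(a) - sum(b) / len(b))
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(n_iter):
        # Shuffle the labels: if model choice is irrelevant, any split is as likely.
        rng.shuffle(pooled)
        left, right = pooled[:len(a)], pooled[len(a):]
        if abs(sum(left) / len(left) - sum(right) / len(right)) >= observed:
            hits += 1
    return hits / n_iter


# Invented scores, for illustration only (not the real class data).
scores_by_model = {
    "ChatGPT": [55, 60, 62, 58, 65, 61, 57, 63],
    "Claude": [68, 72, 66, 70, 75, 69, 71, 67],
}

gap = mean_gap(scores_by_model, "Claude")
p = permutation_p_value(scores_by_model["Claude"], scores_by_model["ChatGPT"])
print(f"Claude vs ChatGPT: {gap:+.1f} points, p = {p:.4f}")
```

A permutation test is used here only because it needs no extra libraries. A regression with ChatGPT as the reference category would be the more usual way to compare several models at once.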

If you are still using default ChatGPT, you are leaving nearly a full letter grade on the table compared to Claude or Perplexity users.

I also looked at exam timing. Do specific models perform better under last-minute pressure?

Statistically, no. The model-by-timing interaction isn’t significant (92% sure). A good model won’t save a rushed submission any better than a bad one.

But procrastination does hurt, regardless of which AI they frantically prompt. In the first graded assignment (GA1), submission time and final score were negatively correlated (-0.37, 100% sure): the later the submission, the lower the score.
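A correlation like this is easy to check yourself. The sketch below computes a plain Pearson correlation. The data is made up, and measuring submission time as hours after the assignment opened is an assumption; `pearson_r` is a hypothetical helper name.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


# Invented data, for illustration only: hours after release vs. final score.
hours_after_release = [2, 10, 24, 48, 72, 96, 120, 140]
final_scores = [80, 60, 85, 55, 75, 50, 70, 45]

r = pearson_r(hours_after_release, final_scores)
print(f"correlation between submission time and score: {r:+.2f}")
```

A negative `r` means later submissions tend to come with lower scores. It says nothing about why.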

BTW, I attributed each student to their most-used model. I can’t guarantee they used that specific model on every single attempt.

Of course, correlation isn’t causation. Maybe Claude writes better code. Or perhaps the kind of student who consciously switches from ChatGPT to Perplexity or Claude is just a better, more engaged student.

But a 9-to-11 point bump is a pretty good reason to experiment.