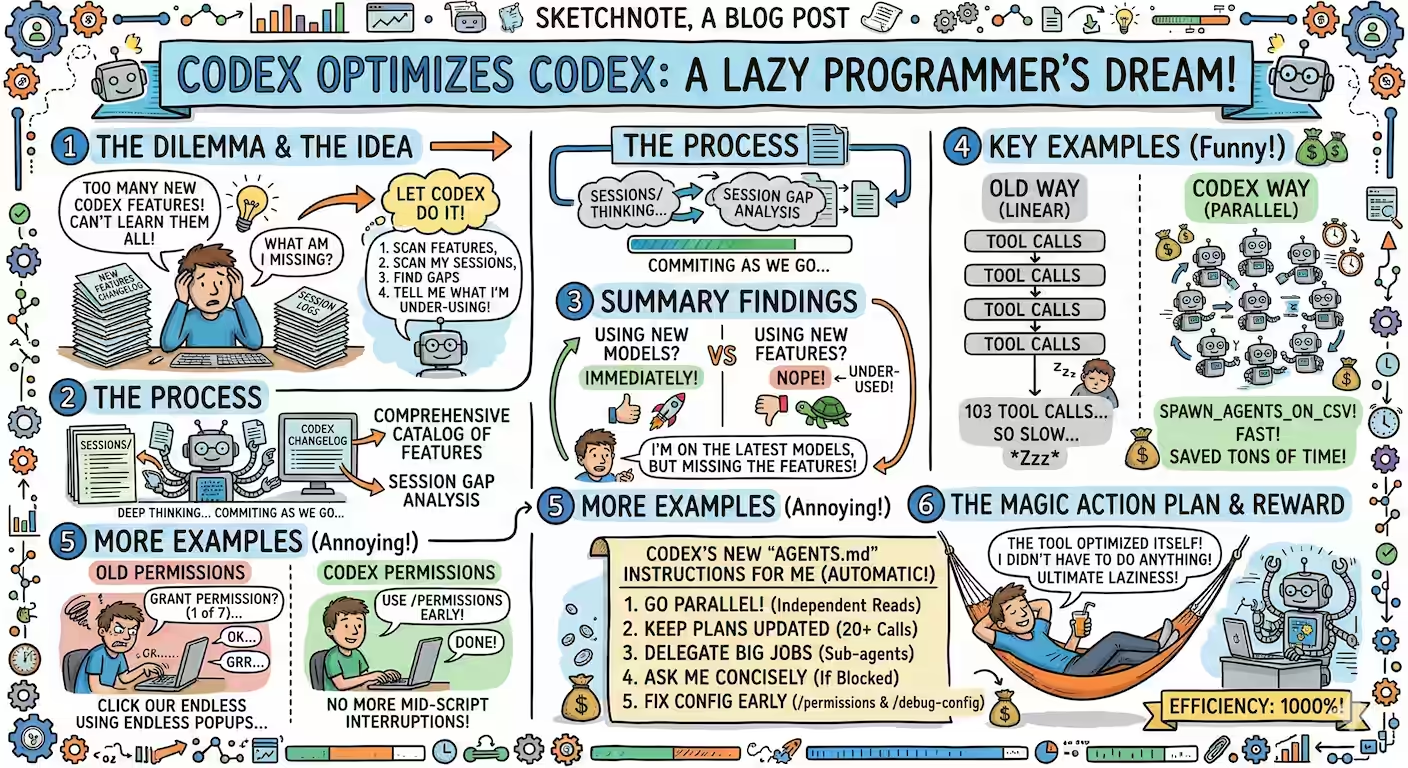

Instead of learning and applying new Codex features, I asked it to analyze my sessions and tell me what I’m under-using.

> I'd like you to analyze my Codex sessions and help me use Codex better.
>
> sessions/ has all my past Codex sessions.
>
> Search online for the OpenAI Codex release notes for the latest features Codex has introduced and read them - from whatever source you find them.
>
> Then, create a comprehensive catalog of Codex features.
>
> Then, analyze my sessions and see which feature I could have used but didn't and make a comprehensive list.
>
> Then summarize which features I should be using more, how, what the benefits are, and with examples from my sessions.
>
> Document these in one or more Markdown files in this directory. Write scripts as required. Commit as you go.

It did a thorough job of listing all the new features and analyzing my gaps.

Here’s the summary: I’m using new models immediately, but not the new features of Codex. For example:

- Parallel execution. Yesterday, I ran ~103 tool calls without using the `spawn_agents_on_csv` feature introduced last week. Running them in parallel would have saved a lot of time.

- Permissions. Last week, I ran a script that asked me for permission 7 times towards the end. Instead, I could have used `/permissions` to set permissions early.

The best part is that it could just add a few instructions to my AGENTS.md:

> Run multiple independent reads in parallel.
>
> For 20+ tool calls, maintain update_plan throughout.
>
> For long-running commands/tests, delegate via sub-agents and report checkpoints.
>
> If blocked by permissions, ask me concise choices.
>
> If sandbox/config gets in the way, use /permissions and /debug-config early.
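To see why the first instruction pays off, here is a minimal Python sketch of running independent reads in parallel. It is not how Codex does it internally; `read_all_parallel` and `max_workers` are names I made up for illustration. It only shows the general idea: reads that don't depend on each other can overlap instead of waiting in line.

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def read_all_parallel(paths, max_workers=8):
    """Read several independent files concurrently.

    Hypothetical helper for illustration only. Results come back
    in the same order as `paths`.
    """
    def read_one(path):
        return Path(path).read_text(encoding="utf-8")

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order even though reads overlap in time.
        return list(pool.map(read_one, paths))
```

Threads suit this case because file reads are I/O-bound. The same pattern only works when the tasks really are independent, which is exactly the condition in the instruction.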

Now, the beauty is that the tool optimized itself. I don’t even need to learn how to optimize it!