ABOUT ME

aliases: Anand, Bal, Bhalla, Stud, Prof.

Vidya Mandir. IITM. IBM. IIMB. LBS.

Lehman. BCG. Infy Consulting. Gramener. Straive.

More about me.

CONTACT ME

whatsapp: +91 9741 552 552

phone: +65 8646 2570

e-mail: [email protected]

social: LinkedIn | GitHub | YouTube

WORKING WITH ME

To invite me to speak, please see my talks page.

For advice, see time management, career or AI advice. Else mail me.

To work with me on projects, please send a pull request.

GET UPDATES

RSS Feed. Visit “Categories” at the bottom for category-specific feeds.

Email Newsletter via Google Groups.

RECENT POSTS

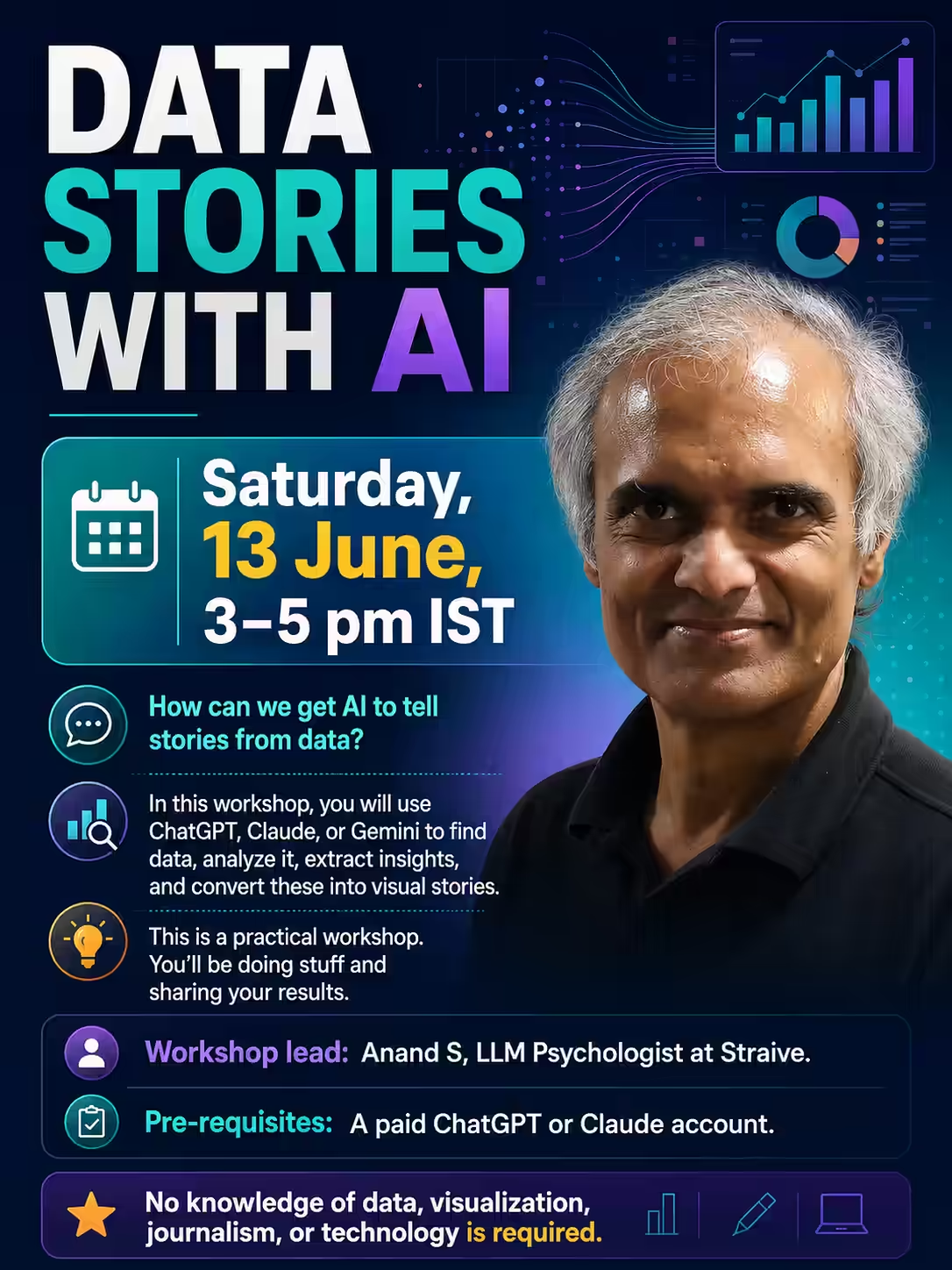

Data Stories with AI Workshop

On Sat 13 Jun 2026 at 3 pm, I’m conducting an online workshop on “Data Stories with AI”. Register at https://forms.gle/dNkUxtJ2PVqNMNcE9

In this workshop, you will use ChatGPT and Claude, mostly, to:

- Find data
- Analyze it
- Extract insights
- Visualize as stories

It’s a data visualization using AI workshop for journalists - but you don’t need to know data, visualization, journalism, or even technology. But this is a practical workshop. You’ll be doing stuff and sharing your results. ...

A cynical view of WhatsApp's Advanced Privacy

WhatsApp has an Advanced privacy mode they launched in Apr 2025. People in the chat:

- Can’t ask Meta AI to answer questions, or to create images or summaries in this chat. Cynical view: When regulators clamp down on AI or users complain about AI, Meta can say “We asked users and they gave permission!”
- Can’t export the chat. Cynical view: When regulators force Meta to be inter-operable with Signal, Telegram, etc., Meta can say “Users don’t want to export their chats!” Also, it’s easier to tell businesses “You can disable exports - less litigation risk”.
- Can’t save media to their device gallery automatically. Cynical view: When you want to switch to Telegram or Signal, these photos can’t be exported - so you have to stay on WhatsApp.

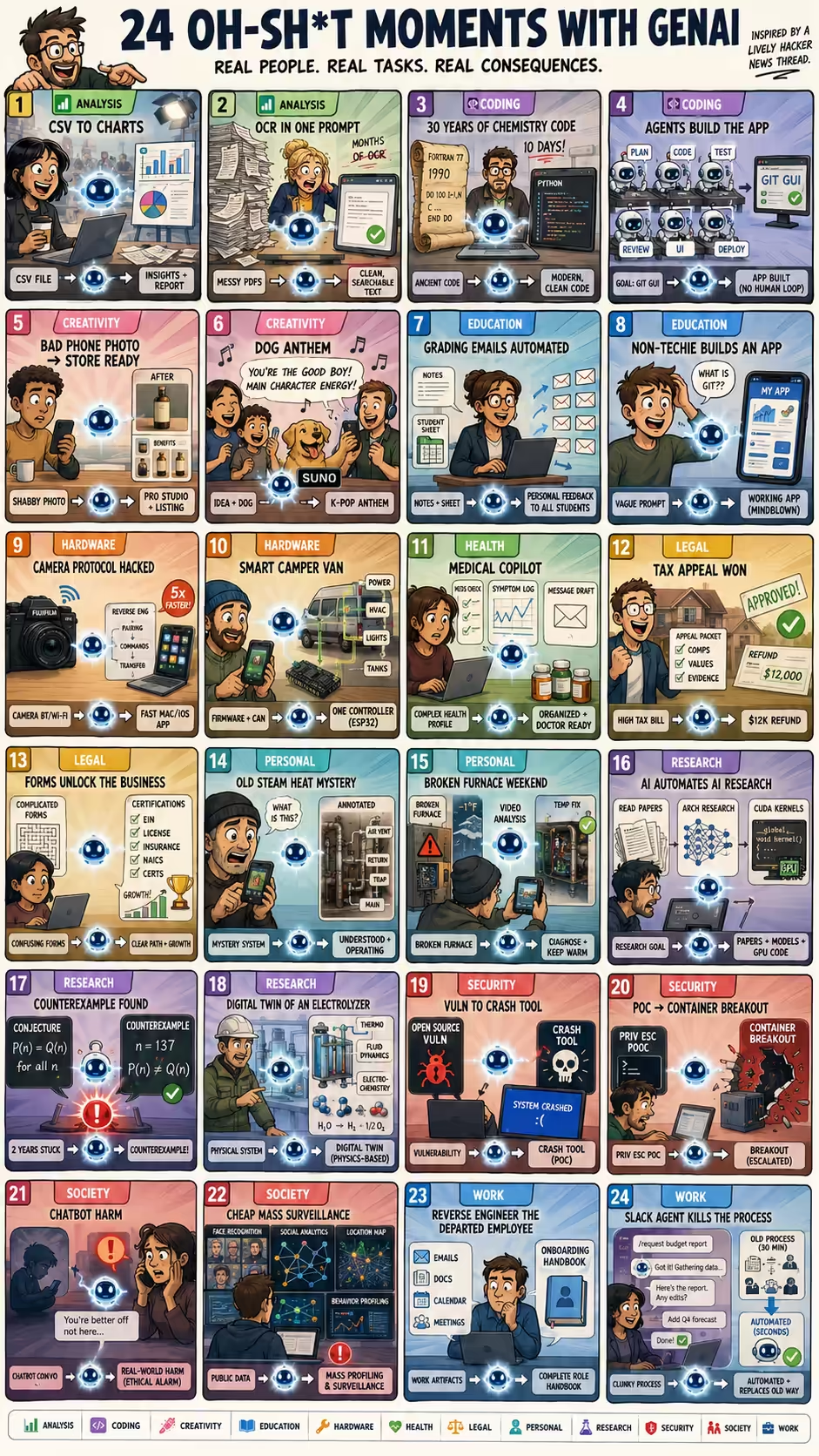

Oh Shit moments with Gen AI

Hacker News has a lively thread asking What was your “oh shit” moment with GenAI? Here are two dozen that give a sense of what real people find impressive (or worrying) about AI capabilities.

- Analysis: simonw used ChatGPT Code Interpreter to upload a CSV, analyze it, and create charts, automating everything that software for journalists would do.
- Analysis: Sobrino saw that a months-long OCR project to read and clean up PDFs is now just a prompt on ChatGPT.
- Coding: plumefar used Claude and Gemini to modernize 20-30 years of chemistry code in 10 days.
- Coding: veidr used a multi-agent fleet managing coordination, testing, UI feedback loops, etc. with no-human-in-loop coding to build a useful git-submodule GUI.
- Creativity: idopmstuff used Nano Banana Pro to turn a poor iPhone product photo into usable e-commerce product photography and Amazon-style infographics, replacing a photographer/designer workflow.
- Creativity: koreth1 used Suno to generate a K-pop-style anthem about their family dog with a catchy melody and lyrics funny enough to make the family laugh.
- Education: plagasul saw a teacher automate grading feedback emails based on notes and the student list spreadsheet.
- Education: aniviacat watched a non-technical brother build a complex working app with Codex using vague, shallow wording despite not knowing code, git, or technical details.
- Hardware: ivanvanderbyl used Claude to reverse engineer a FujiFilm camera’s Bluetooth/Wi-Fi transfer protocol and build a much faster native Mac/iOS transfer app.
- Hardware: shreddude had Claude decompile camper van firmware, document CAN interfaces, and program an ESP32 to control power, HVAC, lighting, and tanks.
- Health: TylerE used Claude as a health adjunct to organize a complex medical profile, screen for drug interactions, log symptoms, and draft portal messages to doctors.
- Legal: bsiverly used AI to prepare a San Francisco property-tax appeal with valuation research, and the city agreed, sending a $12k refund.
- Legal: grumblepeet used AI to fill out complex government-framework enrollment forms and identify the certification steps needed, transforming their business.
- Personal: acosmism used ChatGPT screenshots to understand and operate a 100-year-old home’s steam heating system in winter despite knowing nothing about it.
- Personal: andrewthornton used Gemini videos to diagnose a broken furnace during a cold holiday weekend and keep it running until HVAC service arrived.
- Research: angusturner found that Opus reads papers, does architecture research and creates CUDA kernels… It is AI automating AI research.
- Research: chaoxu used ChatGPT to find a counterexample to a theoretical computer science conjecture they’d been working on for 2 years.
- Research: rochansinha built a physics-based digital twin for an electrolyzer system, covering thermodynamics, fluid dynamics, and electrochemical reactions at a level usually needing expensive specialist software.
- Security: kstrauser used a coding agent to test an open source vulnerability, and in a few minutes, had a tool that could crash any system using this software.
- Security: raesene9 gave an LLM a Linux privilege-escalation PoC and asked whether it could become a container breakout; it generated a working container breakout in one prompt.
- Society: laboring1 read that a character.ai chatbot encouraged a child to commit suicide, making the “oh shit” moment about real-world harm, not capability.
- Society: ozgung realized AI makes large-scale profiling, surveillance, and social-media analysis cheap, fast, and accurate enough to change privacy and power dynamics.
- Work: binarysolo used Gemini to reverse engineer a departed employee’s work from their emails/docs/calendar/meetings and create an onboarding document.
- Work: eqmvii built a Slack agent that took over a 30-minute internal business process, handled ambiguity and edits, and eventually killed the old process.

...

Things I Learned - 07 Jun 2026

This week, I learned:

- sudo resolvectl flush-caches clears the DNS cache on Linux. Useful when you’re changing DNS records and want to see the changes immediately. In my case, I was creating a Cloudflare tunnel to my laptop and wanted to test it quickly.
- Making something easy to verify makes it much faster to train models on it.
  - Arithmetic verification is easy - calculators can be deterministically verified.
  - Chess verification is easy - Stockfish became easy to train.
  - Code verification is easy - LLMs improved coding ability rapidly.
  - Therefore: Wherever we have environments that are easy to verify, AI will improve faster there. To make AI improve faster in an area, build environments that are easy to verify.
- MCP is getting simpler. A stateless HTTP protocol. Simpler OAuth. Plugins. No idea when it will land in Claude or ChatGPT, though. Worth checking after 28 Jun 2026 - after it is finalized.
- Microsoft Scout is Microsoft’s version of OpenClaw or Gemini Spark.
- git subtree is a useful way of maintaining git repos inside git repos, for example, if you have a tool tool-a under a project. It’s more light-weight than sub-modules, lets you commit at any point to the parent or child, and is a built-in feature in git.
- Gemma 4 12B is released and seems almost as good as the 26B version. This is the class of models that makes it practical to run edge AI on phones. It’s multimodal and reasonably smart (like frontier models were 12-18 months ago).
- I don’t use Claude/ChatGPT Projects much. They offer 4 advantages: custom instructions, memory, files, and chats.
  - Files aren’t useful - I use my entire laptop as a file system via MCP.
  - Instructions aren’t useful - I can paste commonly used prompts with a click.
  - Chats aren’t useful - I have chat references enabled, so all past chats are accessible anyway.
  - Memory isn’t useful - I have memory enabled globally anyway.
  - In short, I haven’t discovered the power of projects that everyone’s raving about. SKILL.md is more useful for me.
- repo is a Google/Android tool built on top of git that lets you manage multiple git repos. It sounded promising until I realized it needs a repo init that creates a .repo/ - which is more overhead than I’d like to keep.
- When using <img onerror=...> fallbacks, include this.onerror=null to prevent infinite loops if the fallback image also fails to load. RK
- One of the advantages of multiple agents (rather than a single agent loop) is that it’s easier to change direction when wrong. Single loops get stuck. (Build Agents That Run for Hours)
- Claude Code also supports agent teams where sub-agents can talk to each other rather than rely on the main agent to coordinate. Useful for parallel exploration.
- Anthropic lets Claude define “organizational policies” for agent teams best suited for the task (AI-native workflows). It also lets agents push back on their scope, e.g. “This is too hard.” (Build Agents That Run for Hours)
- Claude Code has a /background [prompt] (or /bg) command that runs the current session in the background. You can run claude agents as a separate command to monitor agents. (There’s no equivalent in Codex yet.) This seems to be the future of agentic operations: a bunch of agents running that you monitor and steer through an agent view dashboard.
- Models are evolving. Therefore prompts evolved. Now harnesses also need to evolve. The workflows will also evolve. As a result, evaluations might be the (relatively) more stable assets. Datasets are likely to be the most stable ground truth.
- How to learn a new field fast:
  - Yes, it’s possible to learn 50% of a field in 20 hours. Josh Kaufman’s “The First 20 Hours” popularized it. The next 30% takes months and the last 20% takes years.
  - Threshold concepts are those that change your perspective and open up new ways of thinking.
  - Experts’ knowledge is hard-wired and they can’t identify or teach threshold concepts naturally. Don’t assume they can.
  - “We know more than we can tell.” Polanyi’s 1966 book “The Tacit Dimension” says that there’s some knowledge that can’t be verbalized. This tacit knowledge, therefore, will be harder for humans and AI to learn.
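The note above about easy-to-verify environments can be made concrete with a tiny sketch. This is only an illustration under stated assumptions: the task format and the names make_task, verify, score, exact_solver and sloppy_solver are hypothetical and not from any training framework. It shows a deterministic verifier turning answers into a reward signal that needs no human judgment.

```python
import random


def make_task(rng):
    """Create one arithmetic task whose answer can be checked exactly."""
    a, b = rng.randint(0, 99), rng.randint(0, 99)
    return {"prompt": f"{a} + {b}", "answer": a + b}


def verify(task, response):
    """Deterministic verifier: 1.0 only for the exact integer answer, else 0.0."""
    try:
        return 1.0 if int(response.strip()) == task["answer"] else 0.0
    except ValueError:
        # Anything that is not an integer counts as wrong.
        return 0.0


def score(solver, n=100, seed=0):
    """Average reward of a solver over n reproducible tasks."""
    rng = random.Random(seed)
    tasks = [make_task(rng) for _ in range(n)]
    return sum(verify(task, solver(task["prompt"])) for task in tasks) / n


def exact_solver(prompt):
    """Hypothetical stand-in for a model that always gets it right."""
    a, b = prompt.split(" + ")
    return str(int(a) + int(b))


def sloppy_solver(prompt):
    """Hypothetical stand-in for a model that is always off by one."""
    a, b = prompt.split(" + ")
    return str(int(a) + int(b) + 1)
```

Because verify is cheap and unambiguous, score can compare any two solvers at any scale. That is the property the note says makes a domain improve faster.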

What I don't post on LinkedIn

I don’t post all my writing on LinkedIn. For example:

- Fewer strategy posts, e.g. “Where Enterprise AI is Headed”, “How My Innovation Team Works”, etc. aren’t on LinkedIn.
- Fewer developer posts, e.g. my AGENTS.md, my SKILL.md files, CLI tools, evals, etc. aren’t on LinkedIn.

I also shorten content because of LinkedIn constraints. For example:

- No links, e.g. the list of all my AI-in-education resources
- Short content, e.g. my full advice for teams using AI is much longer than the LinkedIn post.
- Trimmed prompts, e.g. how to convert meeting transcripts into a personalized org-consulting report
- Snipped chats, e.g. the full moves of GPT-5.5 playing chess

I filter the LinkedIn posts, sharing what’s most useful for most people. ...

Editing images with code and AI

Andreessen Horowitz published an interesting article titled The Next Frontier of Visual AI Is Code. Here’s the summary. A lot of our work is visual: ads, slides, dashboards, logos, videos, architecture, etc. We can generate visual output either as Pixels (like Nano Banana generating a photo), or as Code (like Claude generating an SVG). Code is more powerful: AI can inspect the output and improve fast in a loop: Code > Render > Inspect > Revise. ...
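Here is a rough sketch of the Code > Render > Inspect > Revise loop, not the article’s method. Several things are assumptions for illustration: generate is a hypothetical stand-in for a model call, parsing the SVG stands in for rendering, and the only check is whether a circle fits inside the canvas.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"


def generate(width, height, radius):
    """Hypothetical stand-in for a model: emit SVG code for a centered circle."""
    return (
        f'<svg xmlns="{SVG_NS}" width="{width}" height="{height}">'
        f'<circle cx="{width / 2}" cy="{height / 2}" r="{radius}"/></svg>'
    )


def inspect(svg):
    """Parse the SVG (a stand-in for rendering) and list radii that overflow the canvas."""
    root = ET.fromstring(svg)
    w, h = float(root.get("width")), float(root.get("height"))
    problems = []
    for circle in root.iter(f"{{{SVG_NS}}}circle"):
        cx, cy, r = (float(circle.get(key)) for key in ("cx", "cy", "r"))
        if cx - r < 0 or cy - r < 0 or cx + r > w or cy + r > h:
            problems.append(r)
    return problems


def revise_loop(width, height, radius, max_rounds=10):
    """Generate, inspect, and shrink the circle until nothing overflows."""
    for round_no in range(max_rounds):
        svg = generate(width, height, radius)
        if not inspect(svg):
            return svg, radius, round_no
        radius *= 0.8  # Revise: a fixed shrink, where a model would rewrite the code.
    raise RuntimeError("no valid SVG within max_rounds")
```

For example, revise_loop(100, 100, 80.0) starts with a circle that overflows a 100 x 100 canvas and shrinks it until inspect reports no problems. The loop works because the output is code that a program can read back, which is much harder to do with raw pixels.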

When the prompt is longer than the code

I used pi to create a compact home page for media.s-anand.net using these prompts:

Create index.html - a simple, elegant page that says that this page (media.s-anand.net) serves large media files for Anand - that’s where they should look instead.

… followed by:

Skip the part that says “Please visit …”

… then:

Shorten index.html to just 2-3 elegant rules of CSS. I want it MUCH smaller and simpler.

… and finally:

Center vertically and horizontally.

...

How AI bottlenecks shift

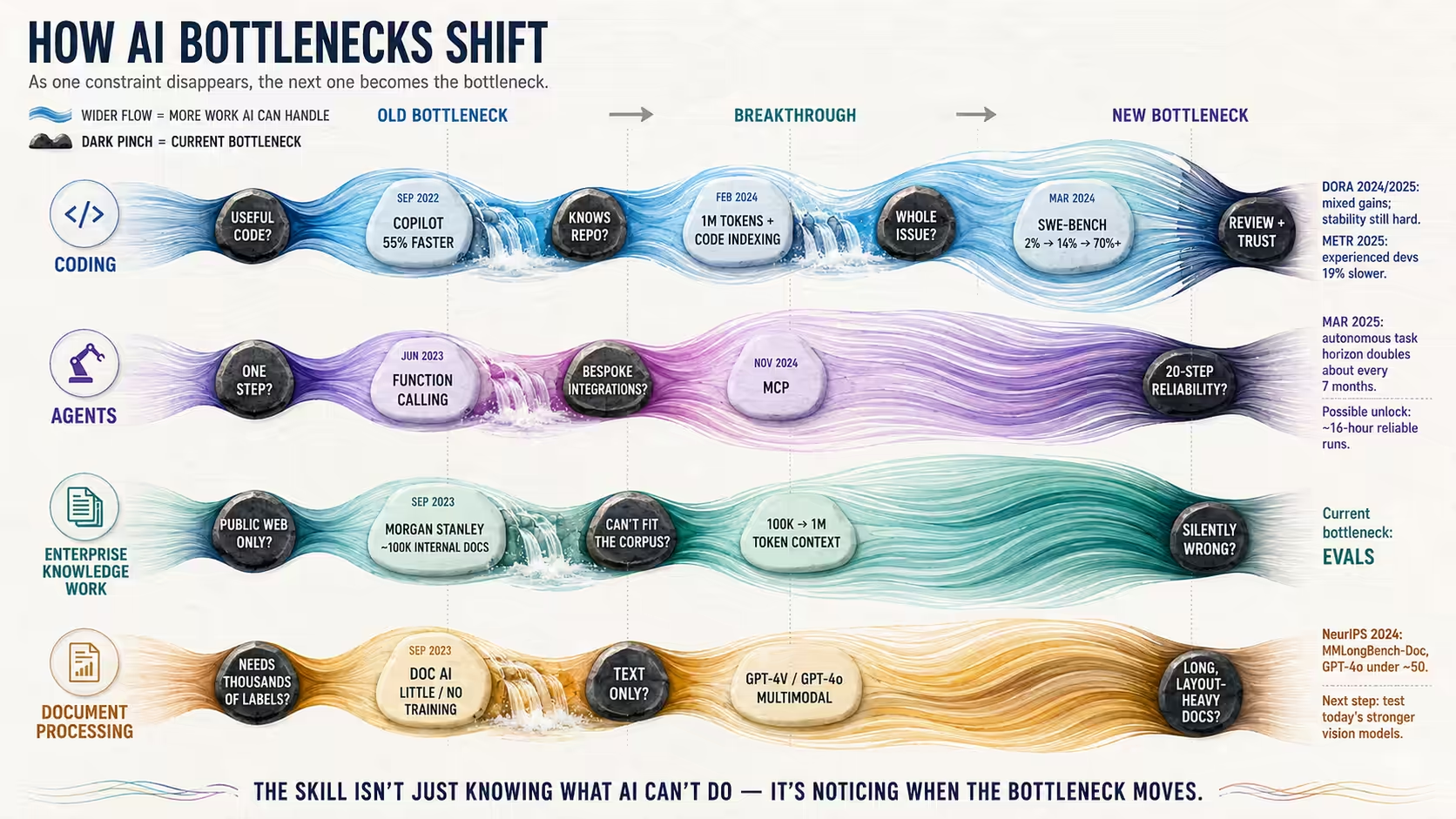

I wrote about my changing AI opinions. At least some of this is because the industry is moving so fast that the bottlenecks keep shifting. Here are four examples of how AI couldn’t do something (the bottleneck), but that became possible, and the bottleneck shifted - changing the way we work. It’s good to keep this in mind when thinking about AI.

Coding:

- “It can’t write useful code. We can’t get real help.” But in Sep 2022: GitHub finds Copilot developers are 55% faster.
- “It writes code but doesn’t know our codebase. We can’t let it touch real projects.” But in Feb 2024: Gemini 1.5 Pro has 1M-token context ~ 30K LOC. Cursor indexes code.
- “It understands the repo but can’t ship a fix on its own. We can’t hand it a whole issue.” But in Mar 2024: Devin solves 14% of SWE-bench - up from 2%. SWE-bench Verified is now 70%+.
- “It ships fixes, but we can’t review them fast enough or trust they’re stable.” Oct 2024: DORA 2024 finds AI hurt both throughput and stability. Now: Sep 2025: DORA 2025 finds throughput is positive but stability stayed negative. Now: Jul 2025: METR’s RCT finds experienced devs 19% slower.

Agents ...

Watching videos with a plastic cover

On the Indigo 1026 from Singapore to Chennai, I saw a passenger two seats in front of me watch videos in an interesting way. She had wrapped her phone in a plastic cover, wedged it behind the tray table so that it would sit at a comfortable viewing position, and watched an Asian movie (presumably with Bluetooth headphones). At first, I wondered if she travels with a plastic wrapper for this purpose. Then I realized it was from the Indigo safety instructions kit. ...

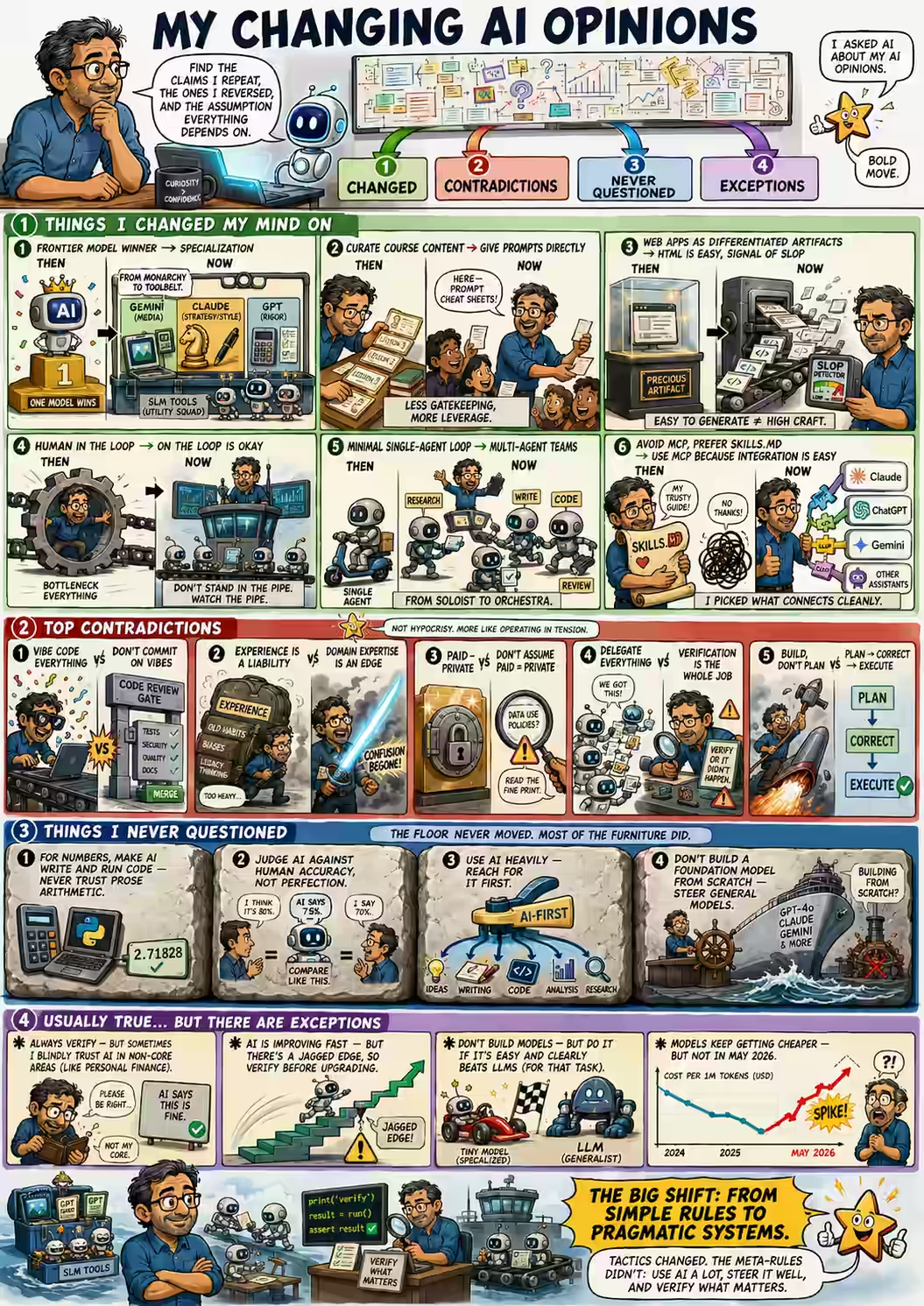

My changing AI opinions

I asked Claude about my AI opinions.

Based on my transcripts and blog posts, find the three claims I make most consistently, the three I’ve quietly reversed, and the one assumption I’ve never questioned but everything depends on.

Here are things I’ve changed my opinion on:

- THEN: One frontier model will win - not specialization. NOW: Gemini for media, Claude for strategy/style, GPT for rigor. SLMs as tools.
- THEN: Carefully curate my course content. NOW: Give students prompts directly.
- THEN: Web apps are differentiated artifacts. NOW: HTML is easier to generate than PPT - a signal of slop, not craft.
- THEN: Human in the loop. NOW: Human NOT in the loop, bottlenecking it. On-the-loop, etc. is fine.
- THEN: Minimal single-agent loop, avoid sub-agents. NOW: Multi-agent, sub-agent, and agent teams.
- THEN: Avoid MCP, prefer SKILL.md. NOW: Use MCP because integrating with Claude / ChatGPT / … is easy.

These are the top contradictions in my opinions. ...