Google’s Protocol Buffers is a “language-neutral, platform-neutral, extensible mechanism for serializing structured data – think XML, but smaller, faster, and simpler.”

XML is slow and large. There’s no doubting that. JSON’s my default alternative, though it’s still a bit large. CSV’s ideal for tabular data, but it handles ragged hierarchies poorly.

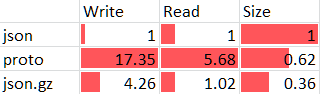

I was trying to see if Protocol Buffers would be smaller and faster, at least when using Python. I took JSON as the base, and checked the write speed, read speed and file sizes. Here’s the comparison:

Protocol Buffers are 17 times slower to write and almost 6 times slower to read than JSON files. The files are smaller, but all it takes is a simple gzip pass to make the JSON files smaller still. Reading json.gz files is just 2% slower than reading plain JSON files, and writing them is only 4 times slower.
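A minimal sketch of how the JSON versus json.gz part of such a comparison could be measured in Python, using only the standard library. It is not taken from the project's repository: the `benchmark` function, the file names, and the sample records are all hypothetical, and the numbers it prints will vary with the data and the machine.

```python
import gzip
import json
import os
import tempfile
import time


def benchmark(records, directory):
    """Return {format: (write_seconds, read_seconds, size_bytes)}."""
    results = {}
    # Both open() and gzip.open() accept text mode with an encoding,
    # so the same write/read code serves both formats.
    for name, opener in (("json", open), ("json.gz", gzip.open)):
        path = os.path.join(directory, "data." + name)

        start = time.perf_counter()
        with opener(path, "wt", encoding="utf-8") as handle:
            json.dump(records, handle)
        write_seconds = time.perf_counter() - start

        start = time.perf_counter()
        with opener(path, "rt", encoding="utf-8") as handle:
            loaded = json.load(handle)
        read_seconds = time.perf_counter() - start

        # Sanity check: the round trip must preserve the data.
        assert loaded == records
        results[name] = (write_seconds, read_seconds, os.path.getsize(path))
    return results


# Hypothetical sample data; real measurements should use representative records.
records = [{"id": i, "name": "item%d" % i, "tags": ["a", "b"]} for i in range(10000)]

with tempfile.TemporaryDirectory() as directory:
    results = benchmark(records, directory)

for name, (write_seconds, read_seconds, size_bytes) in results.items():
    print("%-8s write %.4fs  read %.4fs  size %d bytes"
          % (name, write_seconds, read_seconds, size_bytes))
```

A single run like this is noisy. Repeating each measurement several times and taking the minimum gives steadier numbers.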

The code base is at https://bitbucket.org/sanand0/protobuftest

On the whole, it appears that GZipped JSON files are smaller and faster than Protocol Buffers, and just as simple. What am I missing?

Update: When you add GZipped CSV to the mix, it’s twice as fast as GZipped JSON to read: clearly a huge win. It’s only slightly slower to write, and compresses a tiny bit better than JSON.
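For reference, here is a rough sketch of a GZipped CSV round trip with Python's `csv` and `gzip` modules. The helper names `write_csv_gz` and `read_csv_gz` and the sample rows are made up for illustration, and this assumes flat, tabular data. Note that CSV carries no type information, so every value comes back as a string.

```python
import csv
import gzip
import os
import tempfile


def write_csv_gz(path, header, rows):
    """Write a header and rows to a gzip-compressed CSV file."""
    # newline="" lets the csv module control line endings itself.
    with gzip.open(path, "wt", encoding="utf-8", newline="") as handle:
        writer = csv.writer(handle)
        writer.writerow(header)
        writer.writerows(rows)


def read_csv_gz(path):
    """Read a gzip-compressed CSV file; returns (header, rows) as strings."""
    with gzip.open(path, "rt", encoding="utf-8", newline="") as handle:
        reader = csv.reader(handle)
        header = next(reader)
        return header, list(reader)


# Hypothetical tabular data.
header = ["id", "name"]
rows = [[i, "item%d" % i] for i in range(1000)]

with tempfile.TemporaryDirectory() as directory:
    path = os.path.join(directory, "data.csv.gz")
    write_csv_gz(path, header, rows)
    header_back, rows_back = read_csv_gz(path)
    size_bytes = os.path.getsize(path)

print(header_back, len(rows_back), size_bytes)
```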
