I’ve been using a shared hosting service with 100 WebSpace for the last 7 years. It’s an ad-free account that offers 100MB of space and 3GB of bandwidth per month. Things were fine until two months ago, when my song search engines started attracting an audience. I had anticipated that I might run out of bandwidth, so I used a different server (with a 5GB monthly bandwidth quota) for loading the songs. But what I didn’t anticipate was that my server load would run over the allotted CPU limit.

You’d think this is unusual, given how cheap computing power is, and that I’d run out of bandwidth first. But no. My allotted limit was 1.3% of CPU usage (whatever that meant), and about 2 months ago, I hit 1.5% a few times. I immediately upgraded to an account with a 2.5% limit, but the question was: why did this happen?

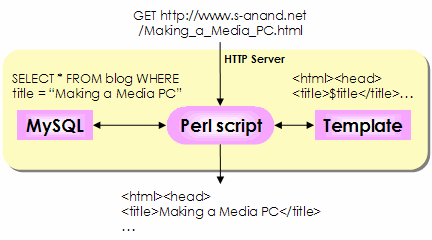

This blog uses a lot of Perl scripts. I store all articles in a MySQL database. Every time a link is requested, I dynamically generate the HTML by pulling up the article from the MySQL database and formatting the text based on a template.

I also use MySQL to store users’ comments. Every time I display each page, I also pull out the comments related to that page.

I can’t store the files directly as HTML because I keep changing the template. Every time I change the template, I have to regenerate all the files. If I do that on my laptop and upload them, I consume a lot of bandwidth. If I do that on the server, I consume a lot of server CPU time.

Anyway, since I’d upgraded my account, I thought things would be fine. Two weeks ago, I hit the 2.5% limit as well. No choice. Had to do something.

If you read the O’Reilly Radar Database War Stories, you’ll gather that databases are great for queries, joins and the like, while flat files are better for processing large volumes of data as a batch. Since page requests come one by one, and I don’t need to do much batch processing, I’d gone in for a MySQL design. But there’s a fair bit of overhead to each database query, and that’s the key problem. Perl takes a while to load (and I suspect my server is not using mod_perl). The DBI module takes a while to load. Connecting to MySQL takes a while. (The query itself, though, is quite fast.)

So I moved to flat files instead. Instead of looking up from a database, I just look up a text file using grep. (I don’t use Perl’s regular expression matching because grep matches regular expressions faster than Perl does.) I have a 1.6MB text file that contains all my blog entries.
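To make the idea concrete, here is a minimal sketch of a flat-file lookup. It is written in Python rather than with grep, and it is not the script this site runs. The file layout (one entry per line, title and body separated by a tab) and the name `find_entry` are assumptions made for illustration.

```python
def find_entry(path, title):
    """Return the body of the first entry whose title equals `title`, or None.

    Assumed layout (hypothetical): one entry per line, "title<TAB>body".
    """
    prefix = title + "\t"
    with open(path, encoding="utf-8") as f:
        # Scan line by line, much as grep would, and stop at the first match.
        for line in f:
            if line.startswith(prefix):
                return line[len(prefix):].rstrip("\n")
    return None
```

The scan is linear in the size of the file, which is why a single large file still takes a while to search.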

But looking up a 1.6MB text file takes a while. So I split the file based on the first letter of the title. This post (Reducing the server load) would go under a file x.r.txt (for ‘R’), while my last post (Calvin and Hobbes animated) would go under a file x.c.txt (for ‘C’). This speeds up the grep by a factor of 5-10.
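The split can be sketched as follows, again in Python instead of grep and under the same assumed layout of one "title, tab, body" entry per line. The `x.<letter>.txt` naming comes from the examples above. The function names and the `x.0.txt` catch-all for titles that don't start with a letter are hypothetical, since the post doesn't say how those are handled.

```python
import os

def shard_name(title):
    """Map a title to its shard file, e.g. 'Reducing the server load' -> 'x.r.txt'."""
    first = title.strip()[:1].lower()
    if not first.isalpha():
        first = "0"  # hypothetical catch-all bucket for non-letter titles
    return f"x.{first}.txt"

def split_into_shards(source_path, out_dir):
    """Copy each 'title<TAB>body' line into the shard chosen by its title."""
    handles = {}
    try:
        with open(source_path, encoding="utf-8") as src:
            for line in src:
                name = shard_name(line.split("\t", 1)[0])
                if name not in handles:
                    handles[name] = open(os.path.join(out_dir, name), "w", encoding="utf-8")
                handles[name].write(line)
    finally:
        for handle in handles.values():
            handle.close()
    return sorted(handles)

def lookup(out_dir, title):
    """Search only the shard that could contain `title`; return its body or None."""
    path = os.path.join(out_dir, shard_name(title))
    if not os.path.exists(path):
        return None
    prefix = title + "\t"
    with open(path, encoding="utf-8") as f:
        for line in f:
            if line.startswith(prefix):
                return line[len(prefix):].rstrip("\n")
    return None
```

Each lookup now scans one small file instead of the whole 1.6MB, so the gain depends on how evenly titles spread across first letters.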

On average, a MySQL lookup used to take 0.9 seconds per query. Now, using grep, it’s down to about 0.5 seconds per query. Flat files reduced the CPU load by about half. (And as a bonus, my site has no SQL code. I never did like SQL that much.)

So that’s why you haven’t seen any posts from me the last couple of weeks. Partly because I didn’t have anything to say. Partly because I was forced to revamp this site.
