Tools in Data Science has a remote online exam (ROE). It has a tough reputation. We conducted one today.

Here’s how today’s ROE unfolded.

The TAs had created 13 questions and shared them with me yesterday. This morning, I tried solving them.

At first glance, it looked scarily hard! But I jumped down a few questions and found that five were trivial, i.e. I just used the “Ask AI” button to copy the question into ChatGPT and it gave me the answer.

- 🟢 06. Layered Encoding Challenge (2.0) is one-shot: ChatGPT

- 🟢 07. Region Containing Point (1.0) is one-shot: ChatGPT - see second chat

- 🟢 10. Fix Broken JSON File (1.0) is one-shot: ChatGPT

- 🟢 11. Cross-entity disambiguation (1.0) is one-shot: ChatGPT

- 🟢 13. Record Terminal Session with asciinema (0.5) is one-shot: ChatGPT

For another four, I needed to just make sure I uploaded the files or HTML:

- 🟡 03. Regex Golf Challenge (2.0) is one-shot, but it’s probably better to download and upload the text files than to copy-paste them: ChatGPT

- 🟡 04. Maze Solver with Constraints (2.0) is one-shot but needs you to upload the image and a good vision model: ChatGPT

- 🟡 05. Cipher Trail (2.0) is one-shot but needs you to provide the secret HTML: ChatGPT

- 🟡 12. Simple Question (0.5) needs you to provide the secret HTML: ChatGPT

Two questions confused ChatGPT a bit, and it needed some nudges. There is real learning here.

- 🟠 08. Reorganize Files with Shell Commands (1.0) is hard because of Unicode issues and the extra README.md students should delete. Asking for variations is the learning: ChatGPT

- 🟠 09. Refactor Python Code with VS Code (1.0) takes a few attempts (the question is imperfect, intentionally) but the error messages are excellent, so iterative feedback is the learning: ChatGPT

One was pretty hard and ChatGPT struggled with it.

- 🔴 02. Korean Speech Dataset API Validation (5.0) actually requires work. At first, ChatGPT refused ethically. When reframed, it tried, but the human-in-the-loop (me) was too slow. So I used Codex, which literally hacks towards the solution! It searched online for existing solutions, read the GitHub discussions for this topic, found my browser tab and started testing itself, … and solved it in 10.5 min!

This leaves the one question that AI can’t solve:

- 🔴 01. Collaborative Token Exchange (5.0) asks you to collect “tokens” from other people and share them. I asked Codex to hack it, but after an hour (of logging into my personal account, my IITM account, even my father’s account, exhausting my token limits, and totally psyching me out), it declared the question unhackable.

Based on this, the instructors, teaching assistants and I decided that:

- This exam is too easy with AI help. Combined with collaboration, it’s ultra-easy. Therefore, let’s add some old questions.

- Thanks to Project 1, people already collaborate at scale. So let’s ask for 500 tokens instead of 100.

We deployed the exam at 12:00 pm IST, an hour before the scheduled time.

The hackers (e.g. those who scan the source code or change their system clocks) could see the questions early and started sharing and solving them.

The TAs said, “Anand, shall we add more questions to make it tougher?”

I said, “No, it’s OK. The ROE has built a reputation for difficulty. Let that change. Let them have an easy exam.”

“If they’re going to split this in groups and have coding agents solve it, they’ll score full marks in 10-15 minutes,” I said to myself.

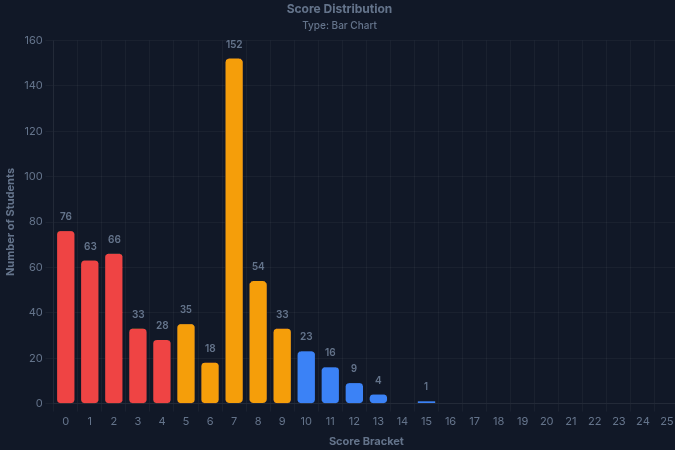

When the exam ended, this was the score distribution.

There were several surprises here. Firstly, 9 questions were repeats. Yet, barring one question, students scored lower on them than before, even though the earlier exams they appeared in were equally tough.

| # | Question | % | Previous % | Previous exam |
|---|---|---|---|---|
| 14 | ⚪ FastAPI Time Series Caching | 8% | 39% | 2025 Sep ROE |
| 15 | ⚪ AI Video Attendee Extraction | 12% | 64% | 2026 Jan GA3 |
| 11 | 🟢 Cross-entity disambiguation | 13% | 64% | 2026 Jan GA4 |
| 7 | 🟢 Region Containing Point | 15% | 38% | 2025 Sep ROE |
| 9 | 🟠 Refactor Python Code with VS Code | 17% | 57% | 2026 Jan GA1 |
| 13 | 🟢 Record Terminal Session with asciinema | 21% | 66% | 2026 Jan GA1 |
| 10 | 🟢 Fix Broken JSON File | 30% | 64% | 2026 Jan GA1 |
| 12 | 🟡 Simple Question | 41% | 64% | 2026 Jan GA1 |
| 8 | 🟠 Reorganize Files with Shell Commands | 53% | 38% | 2026 Jan GA1 |

Just as surprisingly, they scored higher than these on 3 of the 5 new questions:

- 59%: 🟡 03. Regex Golf Challenge (2.0) (253 / 430)

- 59%: 🟡 04. Maze Solver with Constraints (2.0) (252 / 430)

- 54%: 🟡 05. Cipher Trail (2.0) (233 / 430)

One new question ended up being almost the hardest, despite being one-shottable for ChatGPT (GPT 5.4, extended thinking).

- 2%: 🟢 06. Layered Encoding Challenge (2.0) (9 / 430)

The toughest, though, had a 1% success rate. Though Codex could solve it in 10 min, it’s a genuinely hard question.

- 1%: 🔴 02. Korean Speech Dataset API Validation (5.0) (4 / 430)

Which leaves us with the collaboration question - the one that AI can’t solve.

- 31%: 🔴 01. Collaborative Token Exchange (5.0) (132 / 430)

This question is a whole new dynamic altogether. There were about 5 clear clusters of collaborating students, ranging from 5 to 35 students each. They were trading bundles of tokens between themselves. There were a few “super-collaborators” who were doing the heavy lifting. But even with this, the largest correct submission had 84 tokens. The strongest submission was a 51-token submission that 6 students submitted.

(I need to study this far more!)

Yet 84 tokens is far smaller than even the original 100-token target I had set. Clearly, they aren’t collaborating enough.

It’s surprising how little students were using the “Ask AI” button.

- 🔴 ~100 students didn’t use it at all.

- 🟠 ~100 students clicked on it JUST once.

- 🟡 ~100 students used it just 2-5 times. For 15 questions, that’s clearly low.

- 🟢 ~100 students used it 6-20 times. That’s OK.

- 🔵 ~15 students used it 20+ times. (1 clicked on it 45 times. Clearly loves AI.)

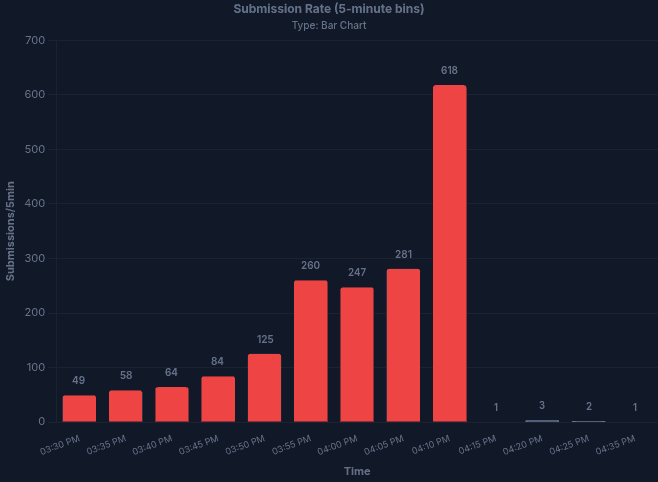

Finally, most students saved their results for the first time just before the deadline.

The problem is that their system clocks were off, so they got a “late submission” error.

BTW, some students used a timing trick for hacking. By setting their system clock ahead, they could see the exam questions before release. But submissions are checked only against the server clock. So, waiting until the last minute to save is a terrible idea. That hurt some students.
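Here’s a minimal sketch of why both things happen. It is not the exam’s actual code: the function names, the placeholder date and the 45-minute window are all assumptions for illustration. The idea is simply that question visibility trusts the student’s clock, while saving trusts the server’s.

```python
from datetime import datetime, timedelta, timezone

IST = timezone(timedelta(hours=5, minutes=30))

# Hypothetical release time and deadline (placeholder date, assumed 45-minute window).
RELEASE = datetime(2000, 1, 1, 13, 0, tzinfo=IST)
DEADLINE = RELEASE + timedelta(minutes=45)


def questions_visible(client_now: datetime) -> bool:
    """Client-side gate (assumed): trusts the student's clock, so it can be fooled."""
    return client_now >= RELEASE


def submission_accepted(server_now: datetime) -> bool:
    """Server-side gate (assumed): only the server's clock counts."""
    return server_now <= DEADLINE


# Case 1: it is really 30 minutes before release, but the system clock is set an hour ahead.
true_time = RELEASE - timedelta(minutes=30)
spoofed_time = true_time + timedelta(hours=1)
early_peek = questions_visible(spoofed_time)

# Case 2: the local clock runs 5 minutes slow and the student saves with
# "2 minutes left" locally. On the server, the deadline passed 3 minutes ago.
server_time = DEADLINE + timedelta(minutes=3)
local_time = server_time - timedelta(minutes=5)
looks_on_time = local_time <= DEADLINE
late_save = submission_accepted(server_time)

print(early_peek, looks_on_time, late_save)
```

In the second case the student’s own clock says there is time left, yet the save is rejected. Saving early and often sidesteps the problem whichever way the clock is off.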

Based on this, here’s what I learnt:

Pressure makes a difference. In past exams, with similar time pressure, students solved the same questions much better. I think they panicked on the first two questions. To be fair, so did I, when I saw them. That’s why I started solving from the bottom.

LESSON 1: Scan end-to-end. Solve quick-win (high impact, low effort) problems first.
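To make that concrete, here’s a rough sketch that orders the 13 questions from my morning run-through. The marks and colour tiers come from the lists above. The numeric effort ranks are my assumption, and they reflect how the questions went for me with ChatGPT, which may not match how they go for you.

```python
# (number, title, marks, tier) as listed in the morning run-through.
QUESTIONS = [
    (1, "Collaborative Token Exchange", 5.0, "red"),
    (2, "Korean Speech Dataset API Validation", 5.0, "red"),
    (3, "Regex Golf Challenge", 2.0, "yellow"),
    (4, "Maze Solver with Constraints", 2.0, "yellow"),
    (5, "Cipher Trail", 2.0, "yellow"),
    (6, "Layered Encoding Challenge", 2.0, "green"),
    (7, "Region Containing Point", 1.0, "green"),
    (8, "Reorganize Files with Shell Commands", 1.0, "orange"),
    (9, "Refactor Python Code with VS Code", 1.0, "orange"),
    (10, "Fix Broken JSON File", 1.0, "green"),
    (11, "Cross-entity disambiguation", 1.0, "green"),
    (12, "Simple Question", 0.5, "yellow"),
    (13, "Record Terminal Session with asciinema", 0.5, "green"),
]

# Assumed effort ranks per tier: lower means less effort.
EFFORT = {"green": 1, "yellow": 2, "orange": 3, "red": 4}


def triage(questions):
    """Lowest effort first; within a tier, highest marks first."""
    return sorted(questions, key=lambda q: (EFFORT[q[3]], -q[2], q[0]))


order = [number for number, _title, _marks, _tier in triage(QUESTIONS)]
print(order)  # [6, 7, 10, 11, 13, 3, 4, 5, 12, 8, 9, 1, 2]
```

By this ordering, the two 5-mark questions at the top of the paper come last, which is roughly how I ended up solving it.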

We have no clue what’s easy or tough. When different students are using different tools, what’s easy for ChatGPT might be hard for Claude and vice versa. Without knowing tool capabilities and usage, this is hard to assess.

LESSON 2: With AI, no one knows what’s easy or hard. Try for yourself.

They aren’t using AI enough. Our advice is to use the “Ask AI” button every time. Half the students barely used it once.

LESSON 3: Use AI first. Focus on what AI can’t do well.

They aren’t collaborating enough. The collaboration question was designed to encourage collaboration. Yet, the largest bundle of tokens shared was 84, far smaller than the 500 token target.

LESSON 4: Make friends with classmates. Work together. It helps: now, and in the future.

That’s worth repeating:

- Scan end-to-end. Solve quick-win (high impact, low effort) problems first.

- With AI, no one knows what’s easy or hard. Try for yourself.

- Use AI first. Focus on what AI can’t do well.

- Make friends with classmates. Work together. It helps: now, and in the future.